Keywords matter. When you enter a search in Google or a prompt in Gemini, you expect the answer to reflect the question. Consider an extreme example. If you entered a search for “best suvs of 2026,” would you expect to see the result below?

Of course not – the 13th President of the US clearly has nothing to do with SUVs in 2026, no matter how authoritative Wikipedia is or how well they manage their SEO.

What if you searched for “top high-end sport utility vehicles,” and got this result?

Would you be surprised? Before you answer, consider this – the title of that page doesn’t mention “top,” “high-end,” or “sport utility vehicles,” and yet it still ranks. As humans, we understand intuitively that these two phrases represent very similar ideas.

As SEOs, we know that advances in machine learning (ML) and natural-language processing (NLP) are allowing Google to understand semantic similarity and word meaning better with each passing year.

All of this raises a much more complicated question …

How much do keywords matter?

Google has had some ability to understand synonyms for years now. Consider this search for “cell phone” in 2012 (via the Internet Archive):

Google clearly recognized that “mobile phone” and “cellular phone” were good matches. This was about a year before the Hummingbird update and text embeddings launched in Google, and a decade before publicly available large language models (LLMs).

Along with Google’s capabilities, searchers themselves have evolved and are more inclined to use natural language. In 2026, you’re less likely to search for “smartphone” and more likely to search for something like “What’s the best budget Android phone with a good camera?” You expect Google to correctly interpret that more complex question.

So, how much has Google improved, and can we measure that improvement?

1,000 long-tail queries / 8,703 results

We chose to use a research corpus of 1,000 “long-tail” queries built specifically for prompt-tracking and spanning 20 industry categories. Here are a few sample queries:

- “What metrics should I track for e-commerce success?”

- “Is it better to work out in the morning?”

- “Do streaming services track what I watch offline?”

We ran these as Google/US/desktop searches and looked specifically at page-one organic results. This yielded 8,703 organic results and display titles (note that, due to SERP features, page one may contain fewer than ten organic results).

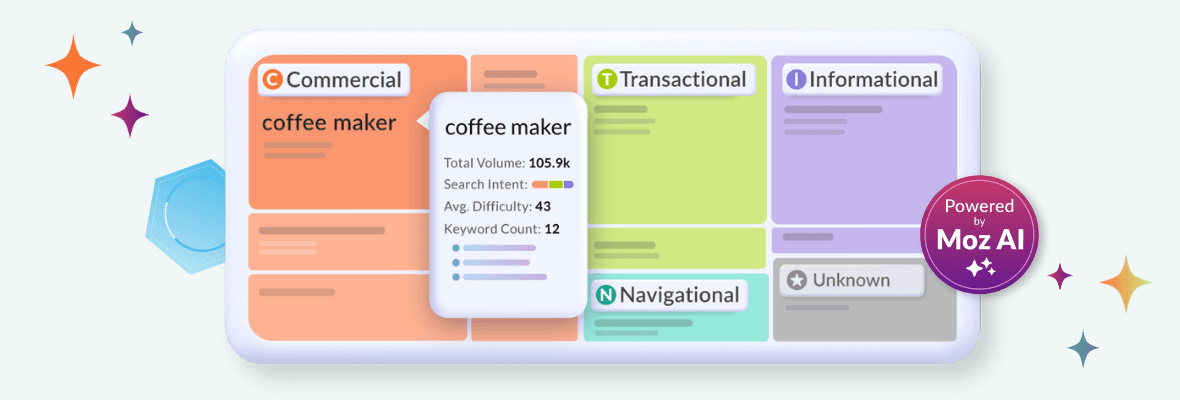

Three ways to measure similarity

To better understand Google’s current capabilities, we compared the queries to organic result titles (as displayed by Google, not <title> tags) using three metrics: (1) exact-match*, (2) partial match with Jaccard similarity, and (3) semantic match with cosine similarity.

1. Exact-match*

Exact-match is pretty self-explanatory, but we chose to be a bit forgiving, normalizing case and punctuation, removing plurals, and allowing any title that contained the full query.
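A minimal Python sketch of this kind of forgiving exact-match* check might look like the following. The function names are hypothetical, and the plural rule (dropping a trailing “s”) is a crude assumption standing in for whatever normalization the study actually used. Because the rule is applied identically to the query and the title, it only needs to be consistent, not linguistically correct.

```python
import re

def normalize(text: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, and crudely singularize words.

    Assumption: the plural rule (drop a trailing "s" from words of four or
    more characters, but not from words ending in "ss") is a simplification,
    not the study's exact rule.
    """
    # Any character that is not a word character or whitespace (including
    # hyphens and curly quotes) becomes a space
    words = re.sub(r"[^\w\s]", " ", text.lower()).split()
    return [
        w[:-1] if len(w) >= 4 and w.endswith("s") and not w.endswith("ss") else w
        for w in words
    ]

def is_forgiving_exact_match(query: str, title: str) -> bool:
    """True if the normalized title contains the full normalized query."""
    q = " ".join(normalize(query))
    t = " ".join(normalize(title))
    if not q:
        return False  # an empty query should never count as a match
    # Pad with spaces so the query only matches on whole-word boundaries
    return f" {q} " in f" {t} "

# Hypothetical titles, for illustration only
print(is_forgiving_exact_match("best suvs of 2026", "Best SUVs of 2026: Top Picks"))  # True
print(is_forgiving_exact_match("best suvs of 2026", "Millard Fillmore - Wikipedia"))  # False
```

The space padding matters: without it, a query like “car” would “match” a title containing “cart.”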

2. Jaccard similarity

To analyze partial matches, we used Jaccard similarity, which compares the elements two sets share (in this case, words) to all of the unique elements across both sets. Put simply, it’s the number of words the two strings share divided by the total number of unique words across both strings. This is measured on a 0.0-1.0 scale.
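Here is a minimal sketch of word-level Jaccard similarity, assuming simple lowercase tokenization on word characters. The study’s exact tokenization isn’t specified, so treat the helper names and splitting rule as illustrative.

```python
import re

def word_set(text: str) -> set[str]:
    """Lowercase and split into a set of unique words, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))

def jaccard(a: str, b: str) -> float:
    """Number of shared unique words divided by total unique words in both."""
    set_a, set_b = word_set(a), word_set(b)
    union = set_a | set_b
    if not union:
        return 0.0  # two empty strings: define similarity as 0.0
    return len(set_a & set_b) / len(union)

print(jaccard("budget android phone", "best budget android phone"))  # 0.75
print(jaccard("budget android phone", "phone android budget"))       # 1.0
```

The second call shows a property worth keeping in mind: because Jaccard works on sets, word order is ignored entirely, so a scrambled phrase scores the same as the original.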

3. Cosine similarity

Finally, we calculated vector embeddings for both strings and the cosine similarity between them. This captures semantic relationships – in a word, “meaning.” Specifically, we used 768-dimensional Nomic embeddings. Cosine similarity can technically range from -1.0 to 1.0, but for text embeddings like these, scores typically fall on a 0.0-1.0 scale. Let’s look at the stats and some examples.
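Once you have the vectors, cosine similarity is straightforward: it’s the dot product of the two embeddings divided by the product of their lengths. The sketch below assumes the embeddings have already been produced by some model (the study used 768-dimensional Nomic embeddings; that step isn’t shown here) and uses toy three-dimensional vectors in their place.

```python
import math

def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Dot product of two vectors divided by the product of their lengths."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)

# Toy vectors standing in for real 768-dimensional embeddings
print(cosine_similarity([1.0, 0.0, 0.0], [1.0, 0.0, 0.0]))  # 1.0
print(cosine_similarity([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]))  # 0.0
```

Because it measures the angle between vectors rather than their length, two embeddings pointing in the same direction score 1.0 regardless of magnitude.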

Exact-match data and examples

Even with our more forgiving exact-match*, only 43 display titles (0.49%) contained the full query. Here’s an example that only differs by a hyphen (-):

… and here’s an example where the title includes the query and a bit more:

Flipping that first statistic, 99.51% of display titles did not contain the full query. Given the data set of long-tail queries, I don’t think this will shock most of you, but it does illustrate how much SEO has evolved since the keyword-stuffing days.
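The two percentages are complements of each other. Here is a quick check of the arithmetic, using only the counts reported in this section:

```python
exact_matches = 43     # display titles containing the full query
total_titles = 8_703   # page-one organic display titles

share_exact = exact_matches / total_titles
print(f"{share_exact:.2%} contained the full query")  # 0.49% contained the full query
print(f"{1 - share_exact:.2%} did not")               # 99.51% did not
```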

Partial match / Jaccard similarity

Here’s where things get more interesting. The mean Jaccard similarity for the 8,703 display titles was 0.23 (note that Jaccard similarity is pretty unforgiving). To put that in context, here’s what a 0.23 score actually looks like:

I’ve highlighted the matching words – as you can see, the mean value represents a pretty limited overlap. Here’s a higher Jaccard score (0.75) that isn’t an exact-match:

Putting aside word order (which Jaccard ignores completely), this is a substantial overlap. Note that a true, word-for-word exact match would have a Jaccard similarity of 1.0, while a forgiving exact-match* title that adds new words to the query would score lower.

Semantic match / cosine similarity

The mean value of cosine similarity across the data set was 0.76 – cosine similarity is much more forgiving than Jaccard similarity. Here’s an example of a 0.76:

This one’s interesting because the display title is a much more structured, SEO-style title. While it’s specific to Minecraft and maybe not quite what the searcher intended, we can certainly see that there’s semantic overlap. Let’s look at a high-similarity example:

This result has a cosine similarity of 0.90, and you can see that the vector embeddings are probably equating concepts like “US” and “America” and ignoring some of the minor differences (like “8 of the”) that don’t impact the relevance to the query.

Bonus: high cosine, low Jaccard

A word-for-word exact match will score 1.0 on all three metrics, and high Jaccard similarity almost always means high cosine similarity. But what about cases where the word overlap is low but the semantic overlap is high?

This match-up has a cosine similarity of 0.91 but a 0.10 Jaccard similarity. Only the word “car” overlaps. As humans, we can pretty easily equate these two phrases, but, more importantly, Google is getting better at mimicking that human intuition.
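Working backward from the Jaccard definition gives a quick sanity check on that 0.10 figure. Assuming the score is computed over sets of unique words and “car” is the only shared word, the two word sets together must contain ten unique words:

$$
J(A, B) = \frac{|A \cap B|}{|A \cup B|} = \frac{1}{|A \cup B|} = 0.10 \quad\Rightarrow\quad |A \cup B| = 10
$$

Rounding doesn’t change this. With one shared word, 1/9 ≈ 0.11 and 1/11 ≈ 0.09, so only a ten-word union rounds to 0.10.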

Here’s another example, where cosine similarity is 0.82 but Jaccard similarity is zero:

Technically, there’s no word overlap here. While some of these words (e.g., “recycled” vs. “recycling”) share the same root, and we all know that EV stands for electric vehicle, these comparisons were historically challenging for machines.
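To see why, here is a minimal sketch contrasting two older word-level techniques. The query/title pair is hypothetical (not from the study’s data), and `crude_stem` is a toy suffix-stripper standing in for a real stemmer. Stemming can recover “recycled” vs. “recycling,” but no amount of suffix-stripping connects “EV” to “electric vehicles.” That kind of relationship is what vector embeddings can capture.

```python
import re

def word_set(text: str) -> set[str]:
    """Lowercase and split into unique words, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))

def crude_stem(word: str) -> str:
    """Hypothetical toy stemmer: strip one common English suffix.

    Real systems use proper stemmers or lemmatizers; this is only illustrative.
    """
    for suffix in ("ing", "ed", "s"):
        # Only strip if at least three characters of the root remain
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

def jaccard(set_a: set[str], set_b: set[str]) -> float:
    """Shared elements divided by total unique elements across both sets."""
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 0.0

# Hypothetical query/title pair, not from the study's data set
query, title = "recycled EV", "recycling electric vehicles"

raw = jaccard(word_set(query), word_set(title))
stemmed = jaccard(
    {crude_stem(w) for w in word_set(query)},
    {crude_stem(w) for w in word_set(title)},
)
print(raw)      # 0.0
print(stemmed)  # 0.25: "recycl" now matches, but "ev" still doesn't
```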

Let’s loop back to that “what causes a car to overheat?” example and look at three organic results. There’s something really interesting going on beyond the semantic similarity of the titles. Notice the bold text (which I’ve highlighted) in the search snippets:

Google isn’t just recognizing synonyms and semantic similarity here – they’re actually highlighting possible answers. While this highlighting is a layer that happens after results are retrieved and ranked, it’s worth exploring in your own search results to understand the kind of information that Google is rewarding.

Keyword targeting in 2026-2030

Keywords matter, but thankfully, the days of rewarding keyword stuffing are behind us. While display titles are only a microcosm of how Google determines relevance, these results support our intuition: Google is becoming better at understanding not only partial matches and simple synonyms, but also semantic similarity.

Semantic similarity using vector embeddings and cosine similarity isn’t just a useful proxy — we know it’s a fundamental part of how Google search works, going back more than a decade. It’s even baked into FastSearch, which powers search-grounding for Gemini. Vector embeddings are one approach to measuring relevance. While we shouldn’t fixate on or over-optimize for any one metric, these tools can help us understand how Google maps semantic relationships and think more flexibly about relevance.

While I believe these developments are good for both searchers and SEOs, we have to take a much broader view of keyword targeting. We’re not just writing — and competing — for a small set of exact-match keywords, but for broader clusters of similar phrases and similar meanings. As search becomes a hybrid of organic and GenAI, this trend will only accelerate. Our tools and metrics need to evolve to meet the challenge.